IAG: Why is a maturity model essential?

On Hugging Face, the exchange platform for open-source machine learning models, there are 48,000 Large Language Models (LLMs) and just as many ways to use them. Should you therefore go for the most popular? Or the most downloaded? Consider an analogy: if you are a farmer, do you plough your fields with a Ferrari? If you are a data architect, do you drive a tractor to work? No. The same goes for Generative AI (IAG): being able to identify your needs is crucial. For that, we offer you a maturity model.

It can't be stressed enough: there's nothing magical about Generative AI. LLMs are programmes built through statistical training: presented with a sentence, they propose the most probable next word(s) in that context. From a certain point of view, we are at the level of «pub chat», where someone repeats what they heard and remembered from a message on the radio that very morning.
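To make the idea of «the most probable following word» concrete, here is a minimal sketch using simple word-pair counts. It is a deliberately tiny stand-in: real LLMs use neural networks trained on vastly larger corpora, and the corpus and function names below are our own illustrative assumptions.

```python
from collections import Counter, defaultdict


def train_bigrams(corpus: str) -> dict:
    """Count, for every word, which words follow it in the corpus."""
    words = corpus.lower().split()
    followers = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        followers[current][following] += 1
    return followers


def most_probable_next(followers: dict, word: str):
    """Return the word most often seen after `word`, or None if unseen."""
    counts = followers.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Hypothetical toy corpus, standing in for the training data
corpus = "the tractor ploughs the field and the tractor ploughs the soil"
model = train_bigrams(corpus)

print(most_probable_next(model, "tractor"))
print(most_probable_next(model, "ferrari"))
```

The model can only repeat what it has «heard»: a word absent from the corpus yields no proposal at all.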

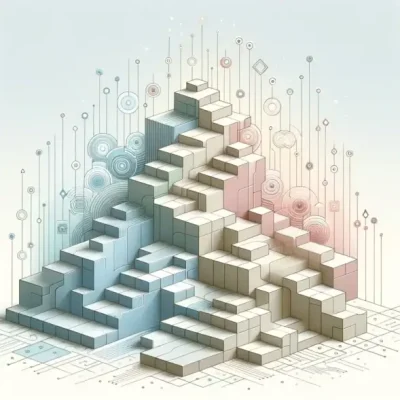

It is interesting to note that the ability of LLMs to «reason» emerged when their training, and therefore in effect their «memory», was considerably increased. In itself, this is remarkable. However, it does not necessarily produce the expected answers in the particular context of a project or a company. We can therefore distinguish different ways of interacting with LLMs, from the most basic use of this knowledge base to a level that makes greater use of reasoning ability.

These correspond to different levels of use, and therefore of maturity.

The first level corresponds to a basic, direct use of LLMs. We are at the level of the generic question. This is the famous «prompt engineering», the art of asking the machine the right questions. It is now established that the quality of the answers depends on how the questions are asked. In general, the answer will also depend on the training corpus and on any specialisation of the LLM for tasks such as code generation. The request can then be defined as:

This solution is obviously the simplest, but it is highly dependent on the organisation behind the LLM.
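As an illustration of how the wording of a question can be varied at this first level, the sketch below assembles a prompt from a few optional parts. The function name, its parameters and the prompt wording are hypothetical choices of ours, not a standard; the resulting text would simply be sent as-is to whichever LLM you use.

```python
def build_prompt(question: str, role: str = "", output_format: str = "") -> str:
    """Assemble a prompt from a question and optional framing elements."""
    parts = []
    if role:
        # Telling the model which point of view to adopt
        parts.append(f"You are {role}.")
    parts.append(f"Question: {question.strip()}")
    if output_format:
        # Constraining the shape of the expected answer
        parts.append(f"Answer format: {output_format}")
    return "\n".join(parts)


# The same need, asked generically and then more precisely
generic = build_prompt("How do I choose an LLM?")
specific = build_prompt(
    "How do I choose an LLM for generating SQL queries?",
    role="a data architect",
    output_format="three bullet points",
)

print(generic)
print("---")
print(specific)
```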

At the second level, the prompt is enriched with information. This is the well-known RAG (Retrieval-Augmented Generation). In practice, this means telling the machine:

Many chatbots rely on this technique. The search for relevant documents is based on vector databases, which make it possible to identify text extracts that are semantically close to the question.

This method assumes prior vectorisation of the knowledge base, that is, representing texts and questions as numerical vectors. A (mathematical) distance measure is then used to find the document that provides the most relevant elements for the answer.
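The retrieval step can be sketched as follows. This is a toy version under stated assumptions: texts are vectorised as plain word counts (real systems use learned embeddings stored in a vector database), closeness is measured with cosine similarity (one common choice, where a higher score means closer), and the knowledge base and prompt wording are invented for the example.

```python
import math
from collections import Counter


def vectorise(text: str) -> Counter:
    """Toy vectorisation: word counts instead of a learned embedding."""
    cleaned = text.lower().replace("?", " ").replace(".", " ")
    return Counter(cleaned.split())


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine of the angle between two sparse word-count vectors."""
    dot = sum(a[word] * b[word] for word in a if word in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(question: str, documents: list) -> str:
    """Return the document whose vector is closest to the question's."""
    q = vectorise(question)
    return max(documents, key=lambda doc: cosine_similarity(q, vectorise(doc)))


def enrich_prompt(question: str, documents: list) -> str:
    """Build the enriched prompt: retrieved extract first, then the question."""
    extract = retrieve(question, documents)
    return f"Using the following extract:\n{extract}\n\nAnswer the question: {question}"


# Hypothetical company knowledge base
knowledge_base = [
    "Expense reports must be submitted before the fifth of each month.",
    "The cafeteria is open from noon to two in the afternoon.",
    "Remote work requests are approved by the team manager.",
]
question = "When must expense reports be submitted?"
print(enrich_prompt(question, knowledge_base))
```

In a real deployment the documents would be vectorised once, ahead of time, and only the question would be vectorised at query time.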

A variation of this enhancement is fine-tuning. While very few organisations can marshal resources like those of Meta or OpenAI, «low-cost» techniques do exist. These consist, as with any neural network, of freezing the weights of the lower layers and working only on the upper (final) layers, often while reducing the numerical precision. In a way, this is like a trainee learning company-specific concepts «on top» of their university knowledge.

Reducing the precision of the calculations multiplies the number of operations a given processor can perform. The gains in calculation speed are considerable (often by a factor of 10 to 20) and allow the LLM to be «taught» the main concepts of your organisation.
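The principle of freezing the lower layers can be shown on a toy network in plain Python. This is only a sketch under assumptions of ours: the frozen weights stand in for pre-trained layers, the «company concept» to learn is an invented function, and a real fine-tuning run would use a deep-learning framework rather than hand-written gradient descent.

```python
import math

# Assumption: these weights stand in for layers learned during large-scale
# pre-training. They are frozen, i.e. never modified below.
FROZEN_WEIGHTS = ((1.0, -1.0), (0.5, 0.5))


def frozen_features(x):
    """Lower layer: fixed weights followed by a tanh activation."""
    return [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in FROZEN_WEIGHTS]


def predict(x, head, bias):
    """Full model: frozen lower layer, then the trainable final layer."""
    return sum(w * h for w, h in zip(head, frozen_features(x))) + bias


def mean_squared_error(samples, head, bias):
    return sum((predict(x, head, bias) - t) ** 2 for x, t in samples) / len(samples)


def fine_tune_head(samples, epochs=500, lr=0.2):
    """Gradient descent on the final layer only (squared-error loss)."""
    head, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for x, target in samples:
            feats = frozen_features(x)
            error = sum(w * h for w, h in zip(head, feats)) + bias - target
            head = [w - lr * error * h for w, h in zip(head, feats)]
            bias -= lr * error
    return head, bias


def company_concept(x):
    """Hypothetical company-specific target the final layer has to learn."""
    h = frozen_features(x)
    return 2.0 * h[0] - 1.0 * h[1] + 0.5


inputs = [(0.0, 1.0), (1.0, 0.0), (1.0, 1.0), (-1.0, 0.5)]
samples = [(x, company_concept(x)) for x in inputs]

before = mean_squared_error(samples, [0.0, 0.0], 0.0)
head, bias = fine_tune_head(samples)
after = mean_squared_error(samples, head, bias)
print(f"error before fine-tuning: {before:.4f}, after: {after:.6f}")
```

Only three numbers (two weights and a bias) are trained here, which is what keeps the cost low: the bulk of the network is reused untouched.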
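What «reducing precision» means can be sketched with a simple 8-bit quantisation of a few weights. The scheme below (a single symmetric scale factor) is one common approach that we assume for illustration, and the weight values are invented. The example shows the storage saving and the rounding error introduced; it does not measure calculation speed.

```python
from array import array


def quantise_int8(weights):
    """Map floats to 8-bit integers using one symmetric scale factor."""
    largest = max(abs(w) for w in weights)
    scale = largest / 127 if largest > 0 else 1.0
    quantised = array("b", (round(w / scale) for w in weights))
    return quantised, scale


def dequantise(quantised, scale):
    """Recover approximate float values from the 8-bit representation."""
    return [value * scale for value in quantised]


# Hypothetical weights taken from a trained layer
weights = [0.12, -0.98, 0.45, 0.003, -0.27, 0.76]

quantised, scale = quantise_int8(weights)
restored = dequantise(quantised, scale)

full_precision = array("f", weights)  # 32-bit floats for comparison
print("float32 storage:", full_precision.itemsize * len(full_precision), "bytes")
print("int8 storage:   ", quantised.itemsize * len(quantised), "bytes")

worst_error = max(abs(a - b) for a, b in zip(weights, restored))
print("largest rounding error:", worst_error)
```

Each weight is stored in a quarter of the space, at the price of a rounding error bounded by half the scale factor.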

The third level is where the LLM will acquire an additional level of skill. Here, the typical question could be:

Information retrieval relies on «agents» (to put it simply, APIs) whose query method has been described to the LLM. It then becomes possible to connect the LLM to different sources such as databases, or even sensors in an IoT-type project. This gives processing a dynamic, real-time dimension, as opposed to static knowledge.

We will typically rely on frameworks such as LangChain or LlamaIndex to orchestrate the various information searches.
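Independently of any particular framework, the mechanism can be sketched in plain Python: each source is wrapped as a described «tool», and a decision step picks which one to call. Everything here is hypothetical, including the tool names, the data they return, and above all `choose_tool`, a keyword-matching placeholder for the decision a real LLM would make after reading the tool descriptions.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Tool:
    name: str
    description: str  # what the LLM would read to decide when to call the tool
    run: Callable[[str], str]


def read_temperature_sensor(room: str) -> str:
    # Hypothetical stand-in for a live IoT reading
    readings = {"server room": 27.5, "warehouse": 18.0}
    return f"{readings[room]} degrees C" if room in readings else "unknown room"


def lookup_stock(item: str) -> str:
    # Hypothetical stand-in for a database query
    stock = {"bolts": 1200, "gears": 40}
    return f"{stock[item]} units" if item in stock else "unknown item"


TOOLS = {
    tool.name: tool
    for tool in [
        Tool("temperature_sensor", "Current temperature of a room", read_temperature_sensor),
        Tool("stock_database", "Current stock level of an item", lookup_stock),
    ]
}


def choose_tool(question: str):
    """Placeholder for the LLM's decision: returns (tool name, argument)."""
    q = question.lower()
    if "temperature" in q:
        room = "server room" if "server room" in q else "warehouse"
        return "temperature_sensor", room
    return "stock_database", q.rstrip("?").split()[-1]


def answer(question: str) -> str:
    """Route the question to a tool and report its live result."""
    name, argument = choose_tool(question)
    return f"[{name}] {TOOLS[name].run(argument)}"


print(answer("What is the temperature in the server room?"))
print(answer("What is the stock level of bolts?"))
```

The answers come from the sources at the moment of the question, not from what the model memorised during training.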

Finally, the fourth level is arguably the most advanced. The idea here is to make full use of the LLM's «understanding» capability and, more specifically, its ability to extract concepts and follow a line of reasoning: what is commonly called «connecting the dots». One example application is CSR, where it may be important to know your nth-tier suppliers in order to determine whether they are linked to dubious ethical practices. Another example is risk assessment and, more generally, any information search that requires «bouncing» from one piece of information to the next. The typical question here would then be:

Here, the dynamic aspect is further enhanced. It is not simply the data that is dynamic but the complete process, which evolves as information is found. At this stage it is important to specify the search directions: the number of combinations can quickly explode without producing convincing results. It is preferable to steer the LLM towards what will create value, according to the needs of each domain.

So, for CSR risk analysis, we can ask the LLM to «dig» along ecological or ethical lines. In the context of an industrial company, we can prioritise links between subcontractors and the origin of components (for example, where ITAR, the International Traffic in Arms Regulations, is a concern).
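The need to steer the search can be illustrated with a small graph exploration. In this sketch the links between companies are given up front as an invented list; in a real project, each «bounce» would come from the LLM extracting a new link from a document. The point shown is the control of the combinatorics: only chosen relation types are followed, and only down to a chosen tier.

```python
from collections import deque

# Hypothetical links: (source, relation, target)
LINKS = [
    ("OurCompany", "supplier", "Alpha Metals"),
    ("OurCompany", "customer", "Retail Group"),
    ("Alpha Metals", "supplier", "Beta Mining"),
    ("Beta Mining", "supplier", "Gamma Extraction"),
    ("Retail Group", "supplier", "Delta Logistics"),
]


def explore(start: str, relations: set, max_depth: int) -> dict:
    """Breadth-first search that follows only the given relation types,
    up to max_depth hops. Returns {entity: tier at which it was found}."""
    found = {}
    queue = deque([(start, 0)])
    while queue:
        node, depth = queue.popleft()
        if depth == max_depth:
            continue  # do not bounce any further from this entity
        for source, relation, target in LINKS:
            if source != node or relation not in relations:
                continue
            if target == start or target in found:
                continue  # already known, avoid going round in circles
            found[target] = depth + 1
            queue.append((target, depth + 1))
    return found


# Steered search: supplier links only, down to the second tier
print(explore("OurCompany", {"supplier"}, max_depth=2))

# Unsteered search: every relation type, same depth
print(explore("OurCompany", {"supplier", "customer"}, max_depth=2))
```

Even on five links, widening the relation types doubles the number of entities to examine; on real data, the growth is what makes explicit search directions necessary.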

So, beyond the media buzz generated by LLMs, the issue of using generative AI ultimately comes down, as is often the case, to control of information and its use. Various options are therefore available to enrich the knowledge base of LLMs and thus take into account the specificities of the company.