AI Act: 10 steps for effective compliance

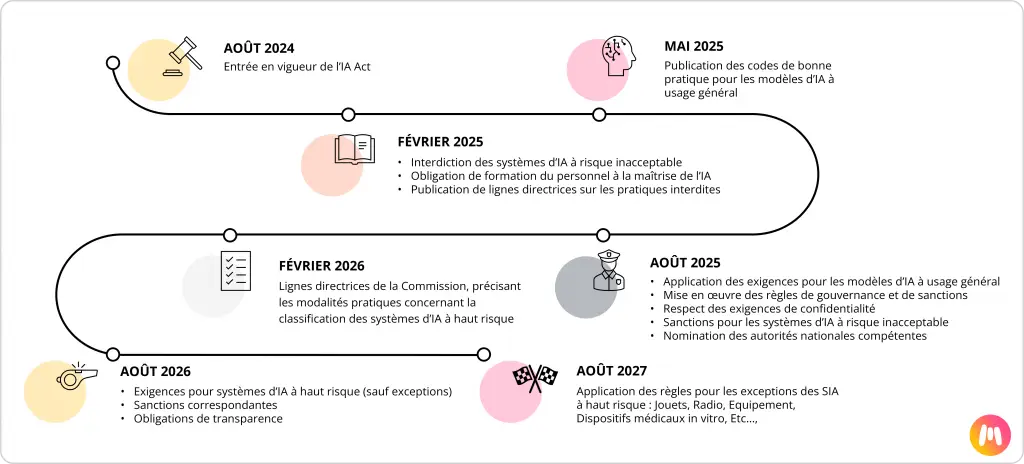

The Artificial Intelligence Act (AI Act) is the first European regulatory framework dedicated to artificial intelligence. It entered into force in August 2024, marking a key milestone for European companies, with a phased rollout until 2027. It imposes specific obligations on businesses according to the level of risk associated with their AI systems: unacceptable, high, limited, or minimal. Certain requirements become enforceable during 2025, notably the prohibition of unacceptable-risk AI and staff training requirements (from February) and transparency obligations for general-purpose AI models (from August).

It is therefore crucial to initiate a compliance process without delay, even in a context where certain guidelines are still awaited. Companies must get their affairs in order now. Anticipation is strategic: delay equals legal and reputational risk, but also loss of competitiveness.

The first step is to catalogue all the AI systems used within your company:

Creating a mapping allows each use case to be positioned within the risk categories defined by the AI Act:

Need help mapping your AI usage? Our experts can guide you in structuring this key first step.

Certain applications are now prohibited: social scoring, untargeted scraping of facial images, real-time biometric recognition in public spaces without specific authorisation, and emotion recognition systems in schools or at work. Every organisation must verify that none of its current uses falls into this category.

Once your systems have been identified, it is necessary to prioritise your efforts. High-risk systems concentrate the majority of the obligations: documentation, robustness, strict governance.

Examples: An automated recruitment tool falls within the “high risk” scope. A marketing chatbot is classified as “limited risk”.

Your roadmap must therefore begin with the critical use cases, without neglecting the others. The following are considered high-risk: systems used in education, employment, access to essential services, and justice; certain safety components subject to other European regulations; and, in finance, creditworthiness assessment or pricing in health and life insurance. Some cases require a priority compliance plan.
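As a rough illustration of how an inventory can be turned into a prioritised roadmap, the sketch below tags each system with a use case and sorts by risk tier. The lookup tables and tag names (`PROHIBITED_USES`, `HIGH_RISK_USES`, `LIMITED_RISK_USES`) are hypothetical simplifications of the examples in this article, not a legal classification; a real assessment requires review against the regulation itself.

```python
from dataclasses import dataclass
from enum import IntEnum


class RiskTier(IntEnum):
    """The four risk categories, ordered so that lower values are more urgent."""
    UNACCEPTABLE = 0
    HIGH = 1
    LIMITED = 2
    MINIMAL = 3


# Hypothetical, simplified lookup tables based on the examples in this article.
PROHIBITED_USES = {"social_scoring", "untargeted_face_scraping"}
HIGH_RISK_USES = {"education", "employment", "essential_services", "justice", "credit_scoring"}
LIMITED_RISK_USES = {"chatbot"}


@dataclass
class AISystem:
    name: str
    use: str  # illustrative tag describing the use case


def classify(system: AISystem) -> RiskTier:
    """Map a system to a tier using the simplified tables above."""
    if system.use in PROHIBITED_USES:
        return RiskTier.UNACCEPTABLE
    if system.use in HIGH_RISK_USES:
        return RiskTier.HIGH
    if system.use in LIMITED_RISK_USES:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


def roadmap(inventory: list[AISystem]) -> list[tuple[str, RiskTier]]:
    """Return (name, tier) pairs with the most critical tiers first."""
    return sorted(((s.name, classify(s)) for s in inventory), key=lambda pair: pair[1])


inventory = [
    AISystem("Marketing chatbot", "chatbot"),
    AISystem("CV screening tool", "employment"),
    AISystem("Spam filter", "spam_filtering"),
]

for name, tier in roadmap(inventory):
    print(f"{tier.name:<12} {name}")
```

Keeping the tiers in an ordered enum means the roadmap is simply a sort, so a newly inventoried system lands in the right position without further logic.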

Depending on how AI is used, the new regulation involves monitoring “ethical and societal” biases. There is no standard repository of biases. Compliance work must therefore be prioritised according to the use case and the criticality of the types of bias.

Example of AI bias typology in the banking sector

For example, the banking sector and its credit-granting processes are subject to particular attention. This explains why, for AI systems used in credit analysis or credit scoring, a bias repository is developed to help modelling teams with compliance.
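One entry in such a bias repository could be a simple outcome-disparity check on credit decisions. The sketch below computes approval rates per group and their ratio. The metric choice, the group labels, and the sample data are assumptions made for illustration; the AI Act does not prescribe a specific metric or threshold.

```python
from collections import defaultdict


def approval_rates(decisions):
    """decisions: iterable of (group, approved) pairs -> {group: approval rate}."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(decisions):
    """Lowest group approval rate divided by the highest (1.0 means parity)."""
    rates = approval_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no approvals in any group, so no outcome disparity
    return min(rates.values()) / highest


# Toy data invented for illustration only: 10 decisions per group.
sample = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 6 + [("B", False)] * 4
)

ratio = disparate_impact_ratio(sample)
print(f"approval rates: {approval_rates(sample)}")
print(f"disparate impact ratio: {ratio:.2f}")
```

A ratio well below 1.0 would be a signal for the modelling team to investigate, not proof of unlawful bias. Which groups to compare and which level triggers a review are decisions for the governance framework.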

Providers (those who develop or train AI systems) must in particular: implement a risk management system, ensure high-quality data governance, technically document the models, and ensure human oversight, robustness, and cybersecurity.

Deployers (those who integrate AI into their processes) must use these systems in accordance with instructions, keep certain logs and, in some cases, carry out an impact assessment on fundamental rights.

LLMs (large language models) presenting systemic risk are subject to enhanced testing and obligations.

Providers of generative AI, such as large language models, must document their models, respect copyright, publish a summary of training data, and provide information to integrators.

AI Act compliance must be coordinated with GDPR and existing data management frameworks. In France, the CNIL recommends coordinating the AI Act impact assessment with the GDPR impact assessment, and involving legal, DPO, data, business, and IT departments in a clear governance framework.

Compliance cannot rest on a single department. It must be integrated into clear governance, involving: Legal and Risk Managers (interpretation of regulation), the CIO/CDOs (data and infrastructure management), business lines and HR (use case specification), and Innovation (business opportunities).

Several models exist: centralised, federated, or integrated within a DevOps/MLOps approach. The choice depends on the size and culture of your organisation.

JEMS helps organisations integrate AI Act compliance into their Data & AI governance, with a clear and pragmatic approach.

Compliance is not just down to lawyers. Business teams, HR, data scientists, and IT managers must understand the new obligations. Sector-specific training (healthcare, finance, industry) facilitates operational adoption.

Supervision will be carried out by national market surveillance authorities and a dedicated European office. In France, the ACPR is preparing to play a role in the financial sector. Companies must be ready to produce their documentation and impact analyses in the event of an inspection.

Preparing today with a regulatory maturity grid is a good way to avoid unpleasant surprises.

The AI Act is gaining momentum.

Compliance requires tangible deliverables: technical documentation, logs, evidence of robustness, training data summaries, and transparency procedures for end-users. These elements must be integrated into existing business processes and maintained over time.
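To make these deliverables trackable over time, they can be kept in a simple evidence register per AI system. The sketch below is a minimal illustration: the identifiers and the register contents are hypothetical, and in practice not every deliverable applies to every system (the applicable set depends on its risk category and on whether you are a provider or a deployer).

```python
# Deliverable names taken from the paragraph above; identifiers are illustrative.
REQUIRED_DELIVERABLES = frozenset({
    "technical_documentation",
    "logs",
    "robustness_evidence",
    "training_data_summary",
    "transparency_procedure",
})


def missing_deliverables(available):
    """Return the deliverables not yet evidenced, in alphabetical order."""
    return sorted(REQUIRED_DELIVERABLES - set(available))


# Hypothetical evidence register: system name -> deliverables already produced.
register = {
    "credit_scoring_model": {"technical_documentation", "logs", "robustness_evidence"},
    "marketing_chatbot": {"transparency_procedure"},
}

for system, available in register.items():
    gaps = missing_deliverables(available)
    print(f"{system}: {len(gaps)} missing -> {', '.join(gaps)}")
```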

Complying with the AI Act may seem complex. At JEMS, we've designed PATH2AI COMPLIANCE, a unique offering that combines flexibility and interdisciplinary expertise.

JEMS is its clients' global partner, supporting them from start to finish across the entire Data & AI value chain. We help European businesses organise and implement their Data & AI compliance programmes. Our expertise is built on operational experience and the successful completion of complex regulatory compliance projects (GDPR, BCBS239, Basel IV, Solvency II, IFRS, EMIR, AI Act …). JEMS has many references for successful compliance projects within programmes covering Data Governance, Data Quality Management, Data Catalogue, Certification Data & AI Factory, RGP and AI Innovation, which allow us to share our AI Act expertise with you.

When does the AI Act come into force?

Entry into force: August 2024. Phased obligations between February 2025 and August 2027.
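The phased schedule can be expressed as a small lookup that answers "what already applies on a given date?". The sketch below uses the application dates set out in the regulation as published in 2024; the labels are abbreviated summaries written for this example, and later legislative changes could shift the dates.

```python
from datetime import date

# Application dates from the regulation as published; labels are simplified.
MILESTONES = [
    (date(2024, 8, 1), "Entry into force"),
    (date(2025, 2, 2), "Prohibited practices and AI literacy obligations apply"),
    (date(2025, 8, 2), "Obligations for general-purpose AI models apply"),
    (date(2026, 8, 2), "Most remaining obligations apply, including many high-risk requirements"),
    (date(2027, 8, 2), "High-risk rules for AI embedded in regulated products apply"),
]


def applicable_on(day: date) -> list[str]:
    """Return the milestones already reached on the given day."""
    return [label for start, label in MILESTONES if start <= day]


for label in applicable_on(date(2025, 9, 1)):
    print(label)
```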

Which practices are forbidden?

Social scoring, mass facial data extraction, real-time biometric recognition in public spaces without specific authorisation, emotion detection systems in schools or at work.

What is a high-risk system?

Systems used in education, employment, finance, healthcare, or justice, as well as certain safety components.

What are the penalties for non-compliance?

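Fines scaled to the infringement and to the company concerned: up to EUR 35 million or 7% of worldwide annual turnover for prohibited practices, and up to EUR 15 million or 3% for most other obligations.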
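As a worked example of how the ceiling on a fine is derived, the sketch below applies the rule set out in the regulation's penalty provisions: the maximum is a fixed amount or a percentage of worldwide annual turnover, whichever is higher (whichever is lower for SMEs). The function name and the sample turnover figures are assumptions for illustration. The result is an upper bound, not a prediction of the fine an authority would actually impose.

```python
# (fixed cap in EUR, percentage of worldwide annual turnover) per infringement type.
PENALTY_TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "other_obligation": (15_000_000, 3),
    "incorrect_information": (7_500_000, 1),
}


def fine_ceiling(turnover_eur: float, infringement: str, sme: bool = False) -> float:
    """Maximum possible fine: the higher of the two amounts, or the lower for SMEs."""
    fixed_cap, pct = PENALTY_TIERS[infringement]
    pct_amount = turnover_eur * pct / 100
    return min(fixed_cap, pct_amount) if sme else max(fixed_cap, pct_amount)


# Hypothetical companies, invented for illustration.
large = fine_ceiling(2_000_000_000, "prohibited_practice")
small = fine_ceiling(10_000_000, "prohibited_practice", sme=True)
print(f"Large group ceiling: EUR {large:,.0f}")
print(f"SME ceiling:         EUR {small:,.0f}")
```

For the large group, 7% of EUR 2 billion (EUR 140 million) exceeds the EUR 35 million fixed amount, so the percentage sets the ceiling. For the SME, 7% of EUR 10 million (EUR 700,000) is the lower figure and applies instead.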

The AI Act is transforming the management of artificial intelligence in Europe. Organisations that prepare today will be better equipped to face scrutiny, and also more credible to their clients and partners. Compliance is not an option: it is a competitive advantage.

With PATH2AI COMPLIANCE, JEMS offers pragmatic, progressive support focused on your use cases to transform regulatory constraints into opportunities for structuring and trust.