Managing hallucinations in AI

Large Language Models (LLMs) offer fascinating prospects, but their reliability remains a crucial challenge. At JEMS, we explore techniques to limit the risks associated with «hallucinations», in order to ensure relevant and robust results in critical contexts. Discover our methodology and the solutions we favour.

LLMs are statistical machines. They predict and generate the next word to be emitted based on the probability linked to the context provided to them, that is, the preceding words. The results produced since 2022 are undoubtedly spectacular, as are the «failures» which are sometimes known as «hallucinations». The fact that a word is «probable» does not mean it is «correct».

While these hallucinations may prove «acceptable» in the context of creative or imaginative work, where they can allow for the consideration of aspects one might not otherwise think of, they can be disastrous in cases where the quality of the output is important. For example:

The simplest technique to limit these drawbacks is to set the «temperature» to zero. This parameter rescales the probability distribution over the candidate words at the output of the neural network. The higher the «temperature», the closer the probabilities of the candidate words become, and therefore the more the text can go in one direction or another. At zero, the model always picks the most probable word.
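The effect of temperature can be illustrated with a minimal sketch. The candidate words and their scores below are invented for illustration, and treating a temperature of zero as "always pick the top word" is a common convention assumed here, not a description of any particular model's API.

```python
import math

def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities, rescaled by the temperature."""
    if temperature <= 0:
        # Assumption: temperature zero means greedy decoding,
        # so all probability mass goes to the highest score.
        best = max(range(len(logits)), key=lambda i: logits[i])
        return [1.0 if i == best else 0.0 for i in range(len(logits))]
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical scores for three candidate next words.
candidates = ["Paris", "Lyon", "London"]
logits = [4.0, 2.0, 1.0]

for t in (0.0, 0.5, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(t, dict(zip(candidates, (round(p, 3) for p in probs))))
```

As the temperature rises, the probability of the top candidate falls and the others catch up, which is why the generated text becomes less predictable.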

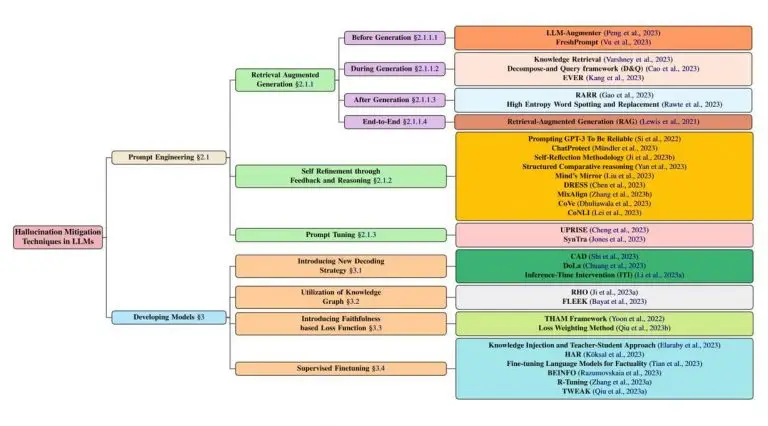

Unfortunately, adjusting this single setting is not enough, and other solutions are needed to control what LLMs produce. Many scientific articles have been written on this subject, and a survey is available here (https://arxiv.org/html/2401.01313v2). The authors distinguish between two main categories of methods:

From our point of view, model-based methods are both:

They are more complex, since in most cases they require a training dataset, whether in the classic question/answer form (often used for fine-tuning) or in a form that relies on the very structure of the models:

They are subject to the same risks, because ultimately there is no control over what is generated: the output always remains statistical.

The other techniques presented in the survey follow a different approach, focusing on the question and the information provided. The LLM itself is left untouched here, which offers two advantages:

In fact, these techniques rely on «off-the-shelf» models and instead seek to «frame» and «control» what an LLM produces, even if it means revising its output.

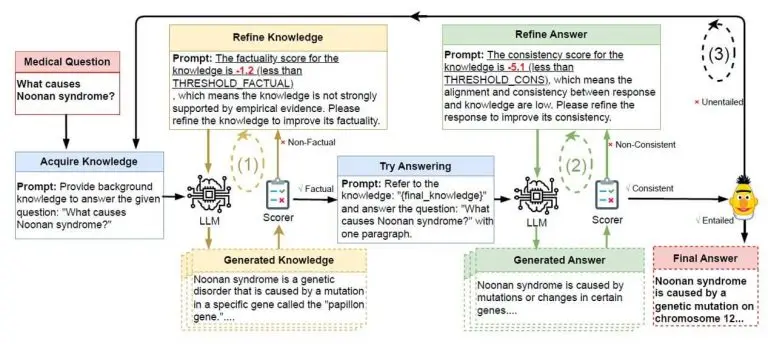

At the core of this approach are «Guardrails»-type mechanisms. They rely on a feedback loop (a bit like servomechanisms), that is to say, a mechanism that checks what is produced against expectations. As long as the quality does not reach the expected level, the LLM is prompted to generate a new response.
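The feedback loop described above can be sketched as follows. This is an illustrative outline, not the API of any specific guardrails library: `generate`, `validate`, `fake_llm` and `must_be_number` are hypothetical names, and the model call is replaced by a stub so that the example runs on its own.

```python
from typing import Callable, Optional

def generate_with_guardrail(
    generate: Callable[[str], str],
    validate: Callable[[str], Optional[str]],
    prompt: str,
    max_attempts: int = 3,
) -> str:
    """Regenerate until validate() reports no problem, or attempts run out."""
    current_prompt = prompt
    for _ in range(max_attempts):
        answer = generate(current_prompt)
        problem = validate(answer)  # None means the answer is acceptable
        if problem is None:
            return answer
        # Feed the detected problem back so the next attempt can correct it.
        current_prompt = (
            f"{prompt}\n\nPrevious answer rejected: {problem}\nPlease try again."
        )
    raise ValueError(f"No acceptable answer after {max_attempts} attempts")

def fake_llm(prompt: str) -> str:
    # Stand-in for a real model call: it only complies once it gets feedback.
    return "42" if "rejected" in prompt else "forty-two"

def must_be_number(answer: str) -> Optional[str]:
    return None if answer.isdigit() else "the answer must contain digits only"

print(generate_with_guardrail(fake_llm, must_be_number, "What is 6 x 7?"))
```

Capping the number of attempts is a deliberate choice in this sketch: a loop that never reaches the expected quality should fail visibly rather than run forever.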

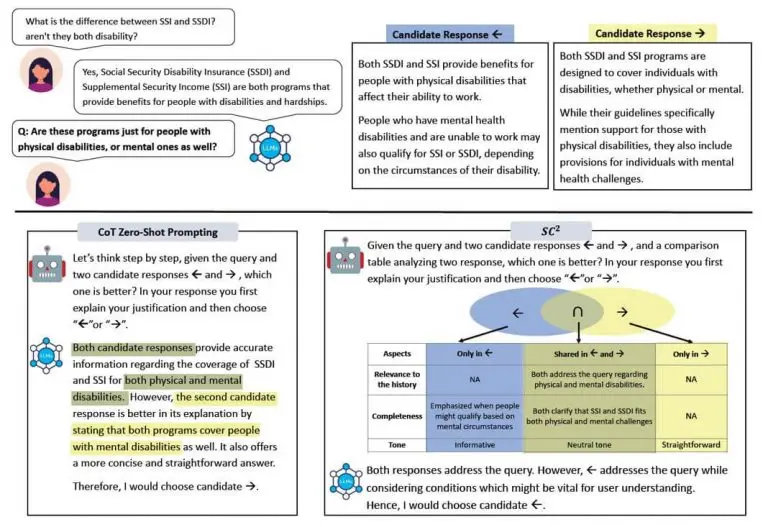

Several methods designated as self-refinement through feedback and reasoning are mentioned in the article. These include:

This last method is also reminiscent of the «Chain Of Verification» (CoVe), in which the model verifies its own generations by generating questions and answering them. Similarly, CoNLI (Chain of Natural Language Inference) «self-critiques» its answers against the source information provided upstream.

In general terms, one can think of reflexive prompting, that is, prompting the LLM to check its own answers against the provided documents and the question asked. For a human, this is akin to proofreading what you've written before sending it!
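A reflexive check of this kind can be sketched in a few lines. This is a simplified single-pass review, not the full CoVe or CoNLI procedure from the literature. The prompt wording, the `SUPPORTED` convention, and the `llm` callable are assumptions made for illustration, and the model is replaced by a stub.

```python
from typing import Callable

REVIEW_TEMPLATE = (
    "Documents:\n{documents}\n\n"
    "Question: {question}\n"
    "Draft answer: {draft}\n\n"
    "Is every claim in the draft supported by the documents? "
    "Reply SUPPORTED, or reply with a corrected answer."
)

def answer_with_self_check(
    llm: Callable[[str], str], question: str, documents: str
) -> str:
    """Draft an answer, then ask the same model to proofread it."""
    draft = llm(f"Documents:\n{documents}\n\nQuestion: {question}")
    review = llm(
        REVIEW_TEMPLATE.format(documents=documents, question=question, draft=draft)
    )
    # Keep the draft if the review approves it, otherwise use the correction.
    return draft if review.strip().upper() == "SUPPORTED" else review.strip()

def fake_llm(prompt: str) -> str:
    # Stand-in for a real model: wrong on the first pass, corrected on review.
    if "Draft answer:" in prompt:
        return "The contract was signed in 2021."
    return "The contract was signed in 2020."

docs = "The contract between both parties was signed in 2021."
print(answer_with_self_check(fake_llm, "When was the contract signed?", docs))
```

Because the review is produced by the same statistical model, it can itself be wrong. In practice such a check would sit inside a bounded retry loop or be combined with other controls.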

Prompt-based methods or output management offer significant advantages in terms of flexibility, stability, or even simplicity compared to direct adjustments of LLMs. These approaches allow us to propose robust, adaptable, and accessible solutions, without depending on the technical complexity of the models themselves.

Hallucinations are therefore part of the inherent risks of LLMs. While they can prove to be an advantage in creative fields, there are unfortunately other areas where they are unacceptable. Several techniques are available, and from JEMS's perspective, it is preferable to favour those that are independent of the LLM and aim at error correction, rather than hoping for a «perfect» LLM.

However, one common point should be noted:

Our approach is based on the idea that mastering AI requires a structured methodology, integrating solutions tailored to each business context. By working with our clients, we develop tools that maximise the opportunities offered by AI while reducing uncertainties.

And how do you frame your AI projects to ensure reliable and relevant outcomes? Our experts are here to guide you through this transformation.